"You can generate 10,000 hours of technical debt in 20 minutes with AI."

- Lee Bacall, CEO of BinaryStar Labs

That line stopped me mid-conversation. It's not a warning against using AI. It's a warning against using AI without verifiability. Speed without accountability is just faster failure.

We've spent the last year building an AI platform for legacy system modernization. We use agents. Multiple agents. And somewhere along the way we hit a problem I haven't seen discussed clearly yet: the problem that emerges not when AI cannot do the work, but when it can.

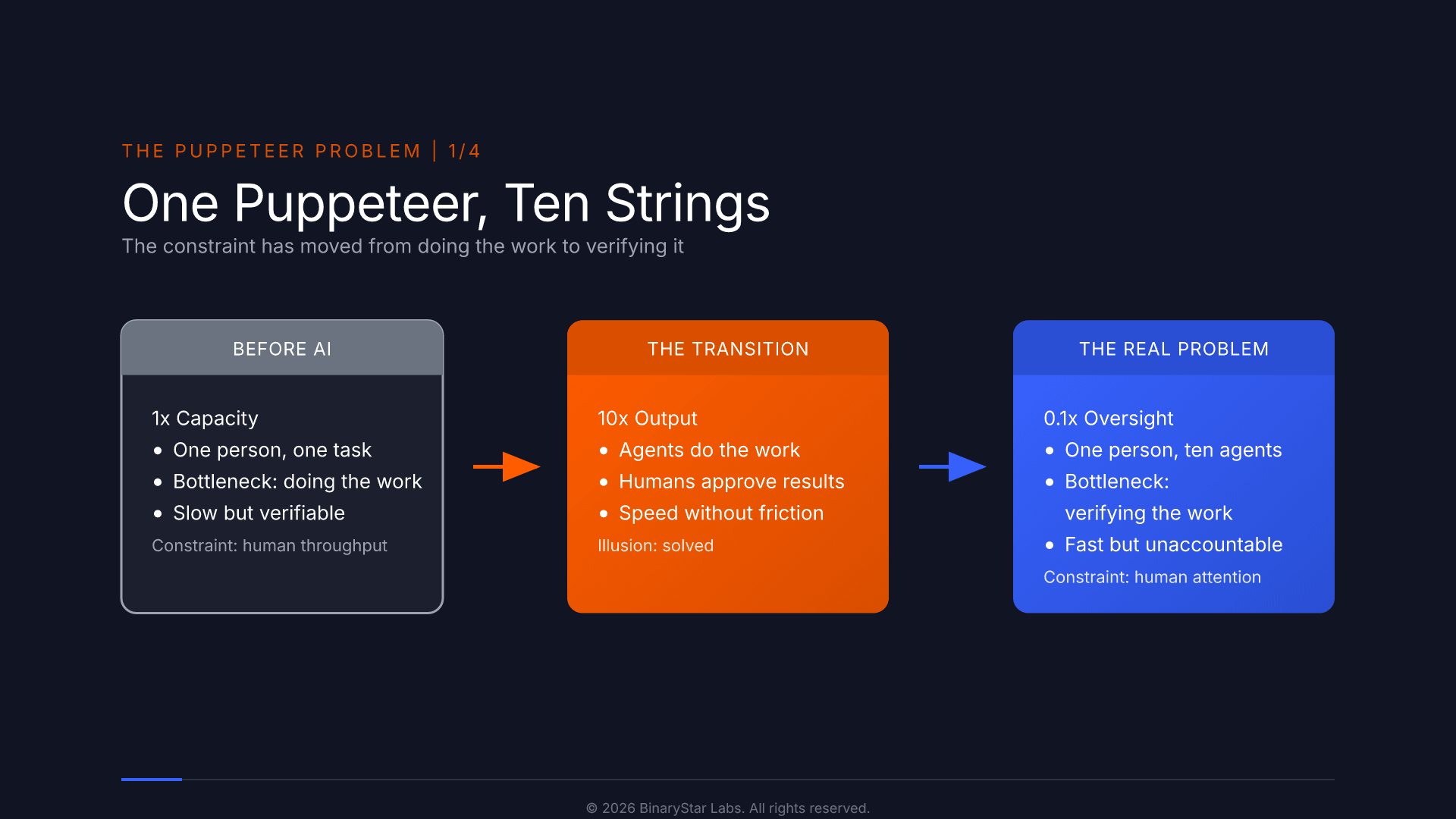

The bottleneck has moved

For decades the constraint in knowledge work was capacity. AI has largely dissolved that constraint. Code generation, document analysis, workflow automation: the doing is no longer the bottleneck. The new bottleneck is oversight.

We are entering the era of the human as puppeteer. One person responsible for coordinating multiple agents, validating multiple outputs, signing off on multiple processes, often in parallel. The question is no longer "can AI do the work?" It's "can a human verify it was done correctly, without redoing it themselves?"

A claim is not a design

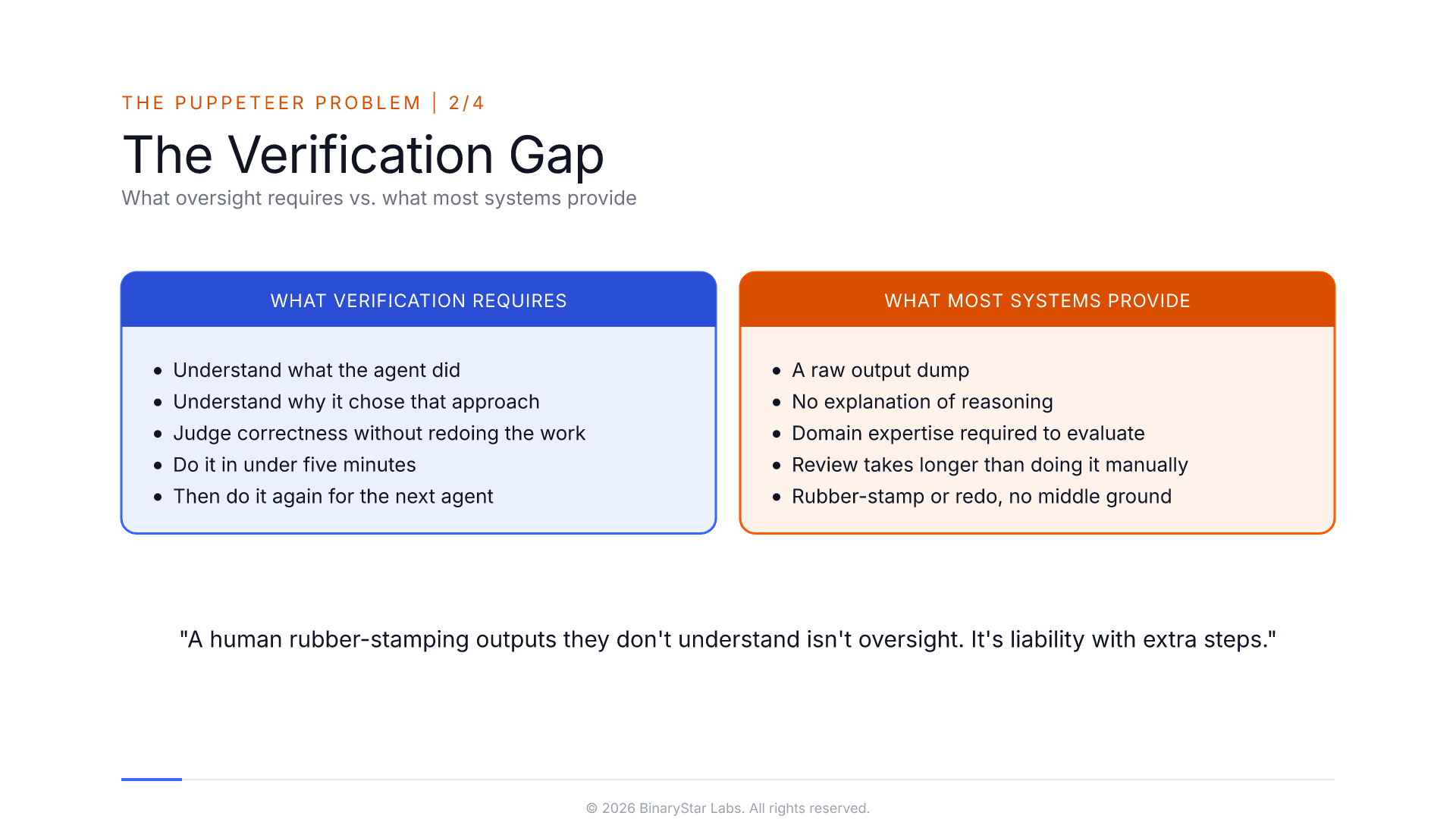

Every serious AI system claims to keep humans in the loop. But consider what that actually requires. The human needs to understand what the agent did and why, and be able to judge whether it was correct without the agent's full expertise, ideally in under five minutes. Then do it again for the next agent.

If the verification interface overwhelms the person reviewing it, the loop is broken in practice even if it exists on paper. Call it the verification gap: the distance between what oversight requires and what most systems actually provide. A human rubber-stamping outputs they don't understand isn't oversight. It's liability with extra steps.

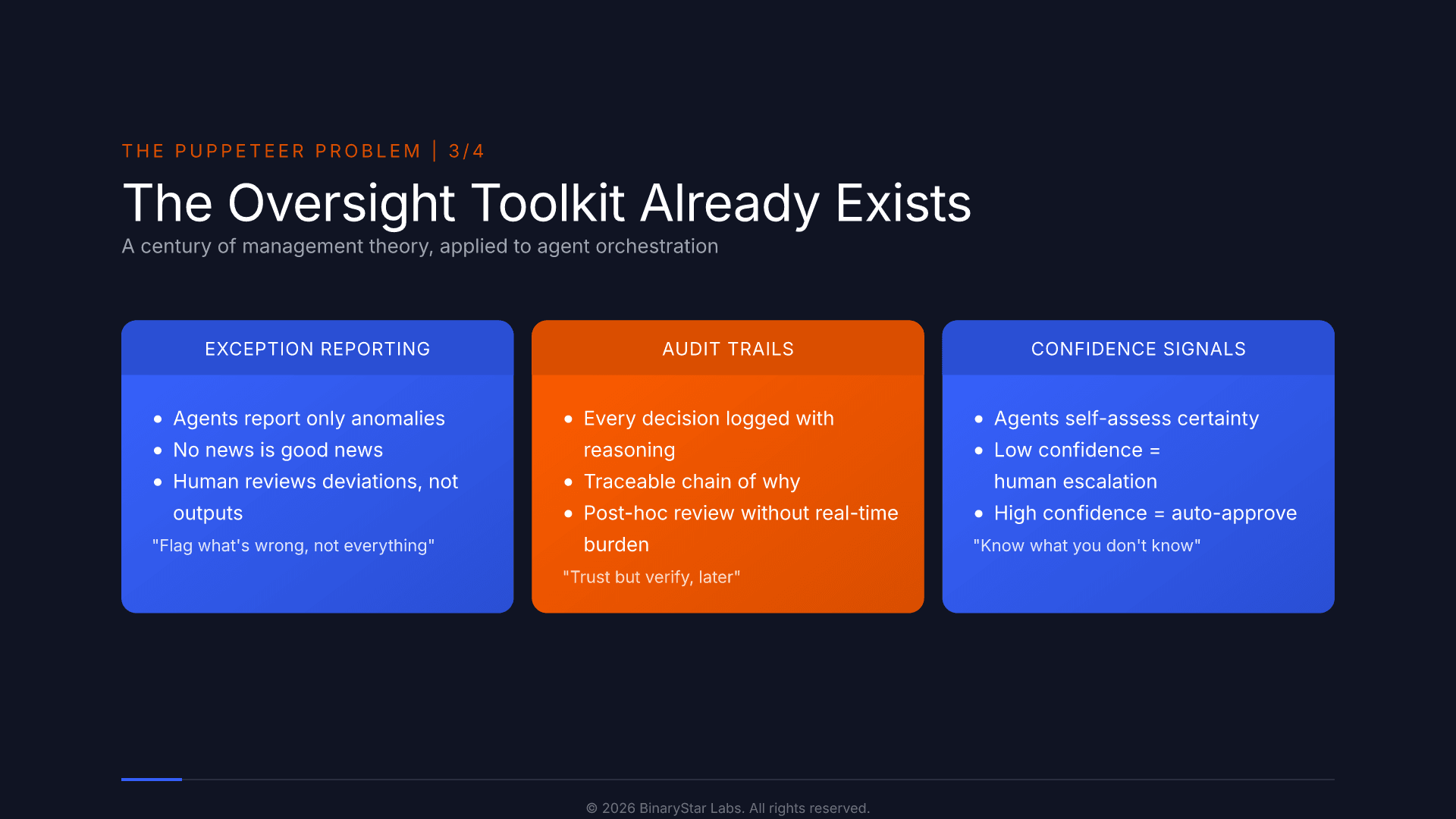

Management theory already solved this

The problem of humans overseeing work they did not do themselves is not new. Exception reporting, audit trails, confidence signaling: management science has spent a century on exactly this. Each solves a different piece of the oversight problem. Exception reporting reduces the volume of what a human must review. Audit trails make post-hoc verification possible without real-time supervision. Confidence signaling lets agents self-triage, escalating only what they are uncertain about.

Agents should report by exception, flag uncertainty, and produce artifacts that a domain expert, not just a developer, can actually read and verify. The vocabulary exists. We just haven't applied it to agents yet.
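To make the three patterns concrete, here is a minimal Python sketch. It assumes that each agent returns a plain-language summary, a self-reported confidence score and a list of anything it flagged as unusual. Every name in it (`AgentOutput`, `triage`, the 0.85 threshold, the task IDs) is hypothetical and illustrative only. It is not MYRA's implementation. Confident, unflagged work is accepted, everything else is escalated to the human (exception reporting driven by confidence signaling), and every decision is logged with its reason (the audit trail).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentOutput:
    # Hypothetical shape of what an agent hands back for review.
    task_id: str
    summary: str       # plain-language explanation a domain expert can read
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0
    anomalies: list[str] = field(default_factory=list)  # anything the agent flagged


@dataclass
class AuditEntry:
    task_id: str
    decision: str
    reason: str
    timestamp: str


def triage(outputs, threshold=0.85):
    """Escalate only exceptions to the human; log every decision for later audit.

    The 0.85 threshold is an illustrative assumption, not a recommended value.
    """
    review_queue, audit_log = [], []
    for out in outputs:
        if out.confidence < threshold:
            decision = "escalate"
            reason = f"confidence {out.confidence:.2f} below {threshold}"
        elif out.anomalies:
            decision = "escalate"
            reason = "flagged: " + "; ".join(out.anomalies)
        else:
            decision, reason = "accept", "within tolerance"
        if decision == "escalate":
            review_queue.append(out)  # only these reach the human reviewer
        audit_log.append(AuditEntry(out.task_id, decision, reason,
                                    datetime.now(timezone.utc).isoformat()))
    return review_queue, audit_log


# Usage with made-up outputs from a hypothetical legacy-migration run.
outputs = [
    AgentOutput("COBOL-001", "Translated payroll batch job; logic unchanged.", 0.97),
    AgentOutput("COBOL-002", "Rounding rule differs from the written spec.", 0.62),
    AgentOutput("COBOL-003", "Translated interest calculation.", 0.91,
                ["undocumented date cutoff at 1999-12-31"]),
]
queue, log = triage(outputs)
print([o.task_id for o in queue])  # ['COBOL-002', 'COBOL-003']
```

The reviewer sees two items instead of three, and the log still records why the first one was accepted, so the decision can be checked later without redoing the work.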

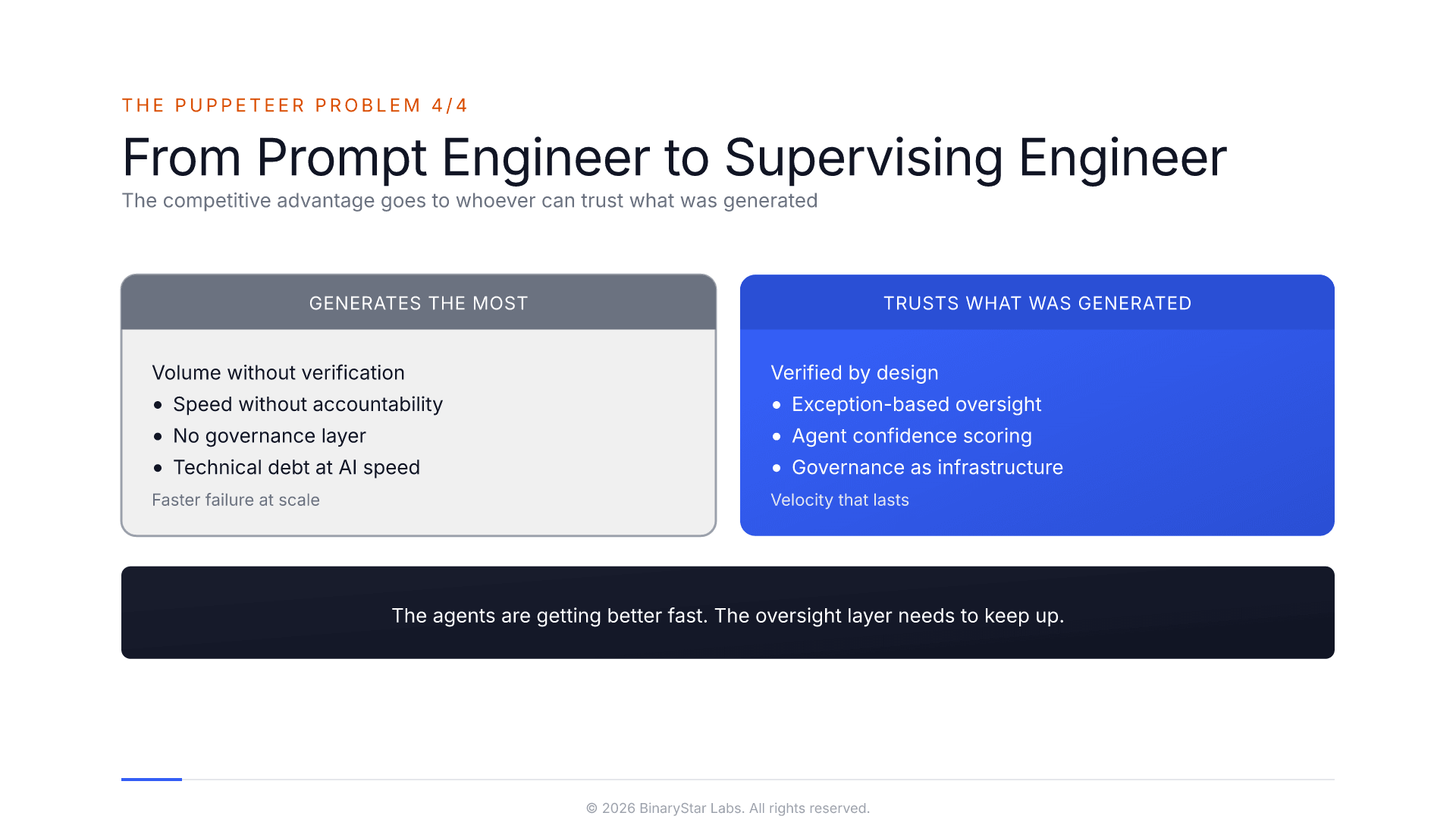

Governance is the moat

Companies deploying AI without answering the verification question are accumulating invisible risk. It doesn't show up immediately. It shows up six months later when nobody can explain why the system made a particular decision, or when a process that seemed fine has quietly drifted from the original intent.

The competitive advantage won't go to whoever generates the most, but to whoever can trust what was generated. We need a new role for that: not a prompt engineer, not an AI developer, but something closer to a supervising engineer. Someone accountable for every deliverable without producing any of them. Someone who reviews by exception, signs off by inspection, and is responsible for quality across the entire project.

The agents are getting better fast. The oversight layer needs to keep up.

Key takeaways

AI has shifted the bottleneck in knowledge work from capacity to oversight. The ability to generate output is no longer the constraint; the ability to verify it is. Most "human in the loop" designs fail this test because they give the reviewer a raw output dump, no explanation of reasoning, and no way to judge correctness without redoing the work.

Management science solved this problem decades ago. Exception reporting reduces review volume. Audit trails enable post-hoc verification. Confidence signaling lets agents self-triage. Applied to AI agents, these patterns turn rubber-stamping into real governance.

The competitive advantage goes not to whoever generates the most, but to whoever can trust what was generated. Organisations need to shift from the prompt engineer to the supervising engineer: someone accountable for every deliverable without producing any of them, who reviews by exception and can verify any output on demand.

Hans Speijer is a co-founder of BinaryStar Labs, where he leads development of MYRA, an AI platform for legacy system modernization that applies the oversight principles described in this article.

If your organisation runs on legacy systems and the people who understand them are approaching retirement, book a free MYRA exploration session to see how structured AI oversight can preserve that knowledge before it walks out the door.